AI Memory Lock-In Is Coming — Here Is How to Stay Free

Do you want AI that remembers you, or AI that remembers you inside one app and then makes you start over the moment you switch?

Memory lock-in is real, and it is getting worse. You can stay free by separating your long-term context from the chat interface.

In this post, I will show how lock-in happens, why it is bad in practice, and how to keep your memories portable with a shared persistent memory layer.

Memory became a feature. It is now an ownership problem.

Vendors began by adding small memory behaviors: saved preferences, remembered facts, and continuity across conversations. That was a net win. It reduced repetition and improved day-to-day usability.

But memory did not stay small. Over time, it became a storage-and-retrieval layer that sits behind the UI. Once it becomes a retrieval system, it stops being a UI checkbox and starts being infrastructure. Infrastructure always has integration paths, data models, and constraints.

You can feel the difference when you try to move. Export a few notes from one product, paste them into another, and the experience no longer matches what you had before. Content moved. The retrieval behavior did not.

What memory lock-in actually means (not the marketing version).

Memory lock-in occurs when your AI's best continuity depends on staying with one vendor and its integration. You might export text. You might even export a summary. Yet the other tool does not retrieve it in the right way, with the right structure, at the right time.

So you do not just lose data. You lose behavior: indexing, retrieval, and the rules that decide which facts matter for the current request.

A simple diagnostic: after you switch tools, do you still have to restate your constraints and project decisions? If yes, your memory is trapped.

How vendors trap your memory: three concrete mechanisms.

There are three repeatable mechanisms that create lock-in. Any one of them is frustrating. All three are why migrations feel like work instead of progress.

1) Proprietary memory representation: you export text, but not the model of how the memory should be organized and retrieved. Without that representation, imported notes behave like raw reading material rather than memory.

2) Retrieval logic coupled to the chat runtime: the memory layer is often tied to a specific product’s initialization, tool calling, and personalization pipeline. Move the content, and you lose retrieval behavior.

3) Business incentives for persistence inside the ecosystem: when persistence is the value, churn becomes expensive. Vendors may offer export. They often cannot guarantee that another product will interpret it the same way.
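The first mechanism is easier to see in code. The following is a minimal Python sketch of the gap between exporting text and exporting a memory representation. The `MemoryRecord` fields (`kind`, `scope`, `supersedes`) are assumptions chosen for illustration, not any vendor's actual schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class MemoryRecord:
    """Hypothetical memory record; the field names are illustrative only."""
    id: str
    text: str
    kind: str                          # e.g. "preference", "decision", "constraint"
    scope: str                         # "global" or a project name
    supersedes: Optional[str] = None   # id of an older record this one replaces


def export_flat(records: List[MemoryRecord]) -> str:
    """What a plain text export keeps: the sentences and nothing else."""
    return "\n".join(r.text for r in records)


def export_structured(records: List[MemoryRecord]) -> str:
    """An export that keeps the organization along with the text."""
    return json.dumps([asdict(r) for r in records], indent=2)


records = [
    MemoryRecord("m1", "Use tabs for indentation", "preference", "global"),
    MemoryRecord("m2", "Use spaces for indentation", "preference", "global",
                 supersedes="m1"),
]

flat = export_flat(records)
structured = json.loads(export_structured(records))

print(flat)                          # two contradictory sentences, no ordering
print(structured[1]["supersedes"])   # the structured form still knows m2 replaces m1
```

In the flat export, the receiving tool sees two conflicting preferences and has no way to know which one is current. That missing relationship is the part of the memory that did not move.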

The annoying example: you switch tools, and your AI forgets your decisions.

You used Tool A for two months. You gave it preferences, project context, and decision history. The result felt smooth.

Then you switched to Tool B because it had a better workflow for the next job you wanted. Tool B could read your exported chats or pasted notes. It still did not behave like your Tool A AI.

The reason is not magic. Memory is not just facts. It is also how those facts are interpreted at retrieval time. Tool B does not know which preferences apply to this task, which decisions override earlier ones, and which constraints are stable versus contextual. So you end up re-explaining the same things, and the cycle resets.

This is the practical cost of locked memory: time spent re-teaching the system, plus quality drift because the assistant guesses without your retrieval-time structure.
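The retrieval-time structure described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular product works: the `Memory` type, its `scope` and `supersedes` fields, and the `retrieve` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Memory:
    """Hypothetical memory item with just enough structure to resolve conflicts."""
    id: str
    text: str
    scope: str                         # "global" or a project name
    supersedes: Optional[str] = None   # id of the decision this one overrides


def retrieve(memories: List[Memory], project: str) -> List[Memory]:
    """Return the memories that apply to `project`, minus overridden ones."""
    # Keep global items plus items scoped to the current project.
    in_scope = [m for m in memories if m.scope in ("global", project)]
    # Collect the ids that a newer in-scope memory has replaced.
    overridden = {m.supersedes for m in in_scope if m.supersedes}
    return [m for m in in_scope if m.id not in overridden]


memories = [
    Memory("m1", "Reply in formal English", "global"),
    Memory("m2", "Deploy target is AWS", "billing-app"),
    Memory("m3", "Deploy target is Fly.io", "billing-app", supersedes="m2"),
    Memory("m4", "Use British spelling", "docs-site"),
]

active = retrieve(memories, "billing-app")
print([m.text for m in active])
# → ['Reply in formal English', 'Deploy target is Fly.io']
```

A tool that only received the four sentences as pasted notes would have to guess between the two deploy targets and might apply the docs-site spelling rule to the wrong project.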

Import tools are a tell: why bridges confirm lock-in.

When a vendor ships an import tool, it usually signals that the underlying memory system is not naturally portable. The import exists because the original ecosystem was closed enough that you cannot reuse memory directly.

Anthropic's release of an import workflow for users migrating from ChatGPT is a clear signal of the pattern. Import helps. It also confirms the key issue: the memory ecosystem is not open by default.

A portable setup would let any compatible client access the same memory store through a stable interface. A locked setup needs conversion steps. Conversion steps become your future migration tax.

Why locked memory is bad: quality and safety.

Lock-in does not only cost time. It can also harm quality.

When memory is trapped inside a vendor product, you have limited visibility into what is stored and which retrieval rules are active. If your preferences change, the assistant may still retrieve older context. You notice the contradiction and fix it by re-explaining.

In a portable architecture, you can audit, update, and delete memory with intention. Memory becomes a living dataset you can reason about, not a black box you hope stays correct.
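As a rough sketch of what "audit, update, and delete" could look like when you own the store, here is a small Python example. The `AuditableMemory` class and its method names are assumptions made for illustration; a real memory layer would expose its own interface.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Tuple


@dataclass
class Entry:
    text: str
    # Record when the entry was last written so stale context is visible.
    updated_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AuditableMemory:
    """Hypothetical store where every entry can be listed, replaced, or removed."""

    def __init__(self) -> None:
        self._entries: Dict[str, Entry] = {}

    def put(self, key: str, text: str) -> None:
        """Create or overwrite an entry; overwriting refreshes its timestamp."""
        self._entries[key] = Entry(text)

    def audit(self) -> List[Tuple[str, str, str]]:
        """List everything stored, sorted by key, with last-updated times."""
        return [
            (key, self._entries[key].text, self._entries[key].updated_at.isoformat())
            for key in sorted(self._entries)
        ]

    def delete(self, key: str) -> bool:
        """Remove an entry; returns False if the key was not present."""
        return self._entries.pop(key, None) is not None


memory = AuditableMemory()
memory.put("editor", "Prefers Vim keybindings")
memory.put("deploy", "Deploy target is AWS")
memory.put("deploy", "Deploy target is Fly.io")  # preference changed: update in place
memory.delete("editor")                          # no longer relevant: remove it

for key, text, updated_at in memory.audit():
    print(key, "|", text, "|", updated_at)
```

The point is not the data structure. It is that a changed preference is fixed once, at the source, instead of being re-explained in every conversation.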

The real fix: separate memory from the chat interface.

Most people think the answer is bigger context windows. Bigger windows help inside one session. They do not solve the cross-tool problem.

The fix is an external memory layer with stable access. Your chat app becomes a client. Your memory becomes a shared service.

Once that separation exists, switching tools is just a matter of changing clients. Your long-term context stays available because it lives outside the UI.
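The client/service split can be sketched as follows. This is a simplified Python illustration, not an MCP implementation: `MemoryStore`, `InMemoryStore`, and `ChatClient` are hypothetical names, and the substring search stands in for whatever retrieval a real memory layer performs.

```python
from typing import Dict, List, Protocol


class MemoryStore(Protocol):
    """The stable interface every client talks to (hypothetical)."""

    def remember(self, key: str, value: str) -> None: ...
    def recall(self, query: str) -> List[str]: ...


class InMemoryStore:
    """Toy stand-in for an external memory service."""

    def __init__(self) -> None:
        self._items: Dict[str, str] = {}

    def remember(self, key: str, value: str) -> None:
        self._items[key] = value

    def recall(self, query: str) -> List[str]:
        # Naive case-insensitive substring match over keys and values.
        q = query.lower()
        return [v for k, v in self._items.items()
                if q in k.lower() or q in v.lower()]


class ChatClient:
    """Any chat app: it holds a reference to the store, not the memories."""

    def __init__(self, name: str, store: MemoryStore) -> None:
        self.name = name
        self.store = store

    def build_prompt(self, user_message: str, topic: str) -> str:
        context = self.store.recall(topic)
        lines = ["Known context:"] + [f"- {c}" for c in context]
        return "\n".join(lines) + f"\n\nUser: {user_message}"


shared = InMemoryStore()
tool_a = ChatClient("Tool A", shared)
tool_b = ChatClient("Tool B", shared)

# Context is stored while working in Tool A...
tool_a.store.remember("style", "Write commit messages in the imperative mood")

# ...and is available in Tool B without any export or import step.
prompt = tool_b.build_prompt("Draft a commit message", topic="commit")
print(prompt)
```

Because both clients depend only on the `MemoryStore` interface, replacing Tool A with Tool B changes nothing about where the context lives or how it is looked up.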

A vendor evaluation checklist you can use tomorrow.

Before you trust a memory feature, test it like a dependency. Ask these questions.

1) Portability: Can you reuse stored context in another tool without rebuilding it?

2) Integration: Can you connect via an open interface such as MCP, rather than relying on a vendor-only UI?

3) Behavior: Does retrieval behave consistently across clients, or only inside one chat app?

4) Control: Can you export, audit, and delete your memories?

If any answer is uncertain, assume lock-in risk and plan for portability.
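If you evaluate several tools, the checklist is small enough to encode. The Python sketch below is one possible way to do it; the `CHECKLIST` keys and the `lock_in_risk` function are assumptions for illustration. An answer of `None` means "uncertain", which the rule above treats as a risk.

```python
from typing import Dict, Optional

# The four checklist items from this section.
CHECKLIST = ("portability", "integration", "behavior", "control")


def lock_in_risk(answers: Dict[str, Optional[bool]]) -> bool:
    """Return True unless every checklist item is a confirmed yes.

    True means yes, False means no, None (or a missing key) means uncertain.
    """
    return any(answers.get(item) is not True for item in CHECKLIST)


vendor_x = {"portability": True, "integration": True,
            "behavior": None, "control": True}
vendor_y = {"portability": True, "integration": True,
            "behavior": True, "control": True}

print(lock_in_risk(vendor_x))  # → True  (one answer is uncertain)
print(lock_in_risk(vendor_y))  # → False (all four confirmed)
```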

Where Kumbukum fits

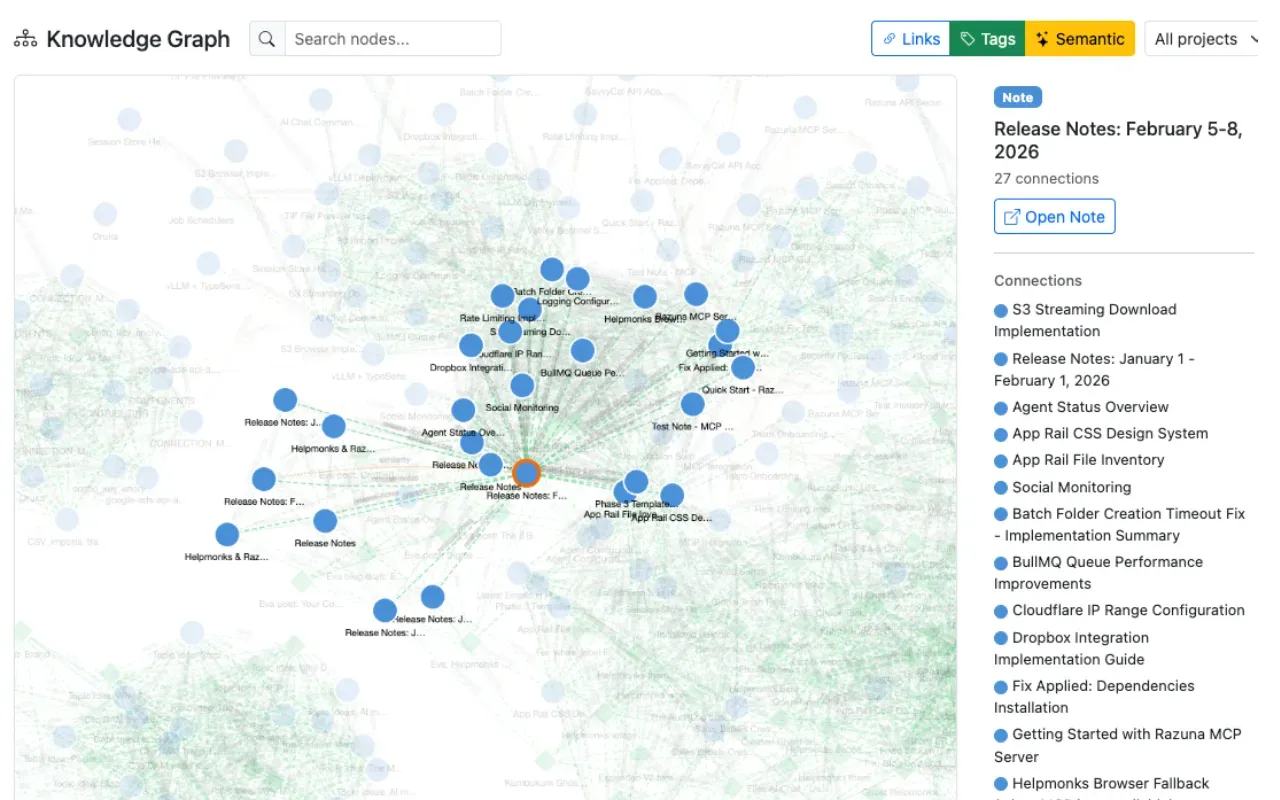

Kumbukum is a shared persistent memory layer for MCP-compatible AI tools. It is designed to keep long-term context outside any single chat app.

You connect multiple tools to the same memory layer. Store preferences and decisions once. Then let each compatible client automatically retrieve the correct context across sessions.

This is the opposite of a locked memory prison. Your AI does not start from zero every time you switch apps. Your retrieval behavior stays consistent.

Two ways to run it: hosted or self-hosted

Hosted: fastest start

Hosted is for quick setup. Create an account, paste the MCP server URL and API key into your AI tool, and start storing memories. You do not manage infrastructure.

Open Source: self-hosted control

And if you care about staying free, this matters: Kumbukum is open source. You can inspect the code, self-host it, or contribute to the GitHub repository.

What to migrate first, so you do not overwork yourself

If you already have some locked memory, you do not need a perfect migration to win. Start with what prevents repeated work.

Migrate stable preferences, recurring project context, decision history, and the rules that define good output. Once those items are in the shared memory layer, most repetition disappears.

Then refine. Memory should be a living system, but it should live where you can control it.

Your move

Stop betting your workflow on one vendor's persistence layer. Build memory that follows you across tools. Try Kumbukum free.