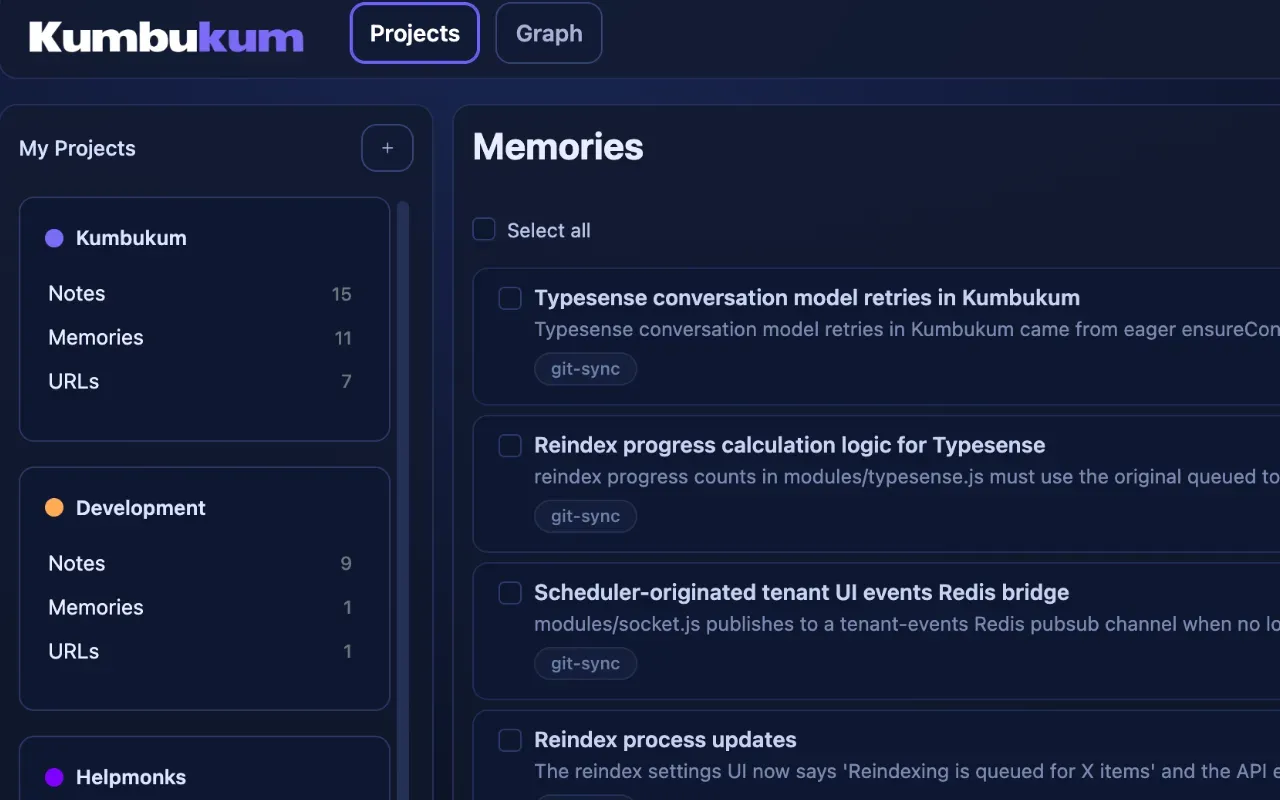

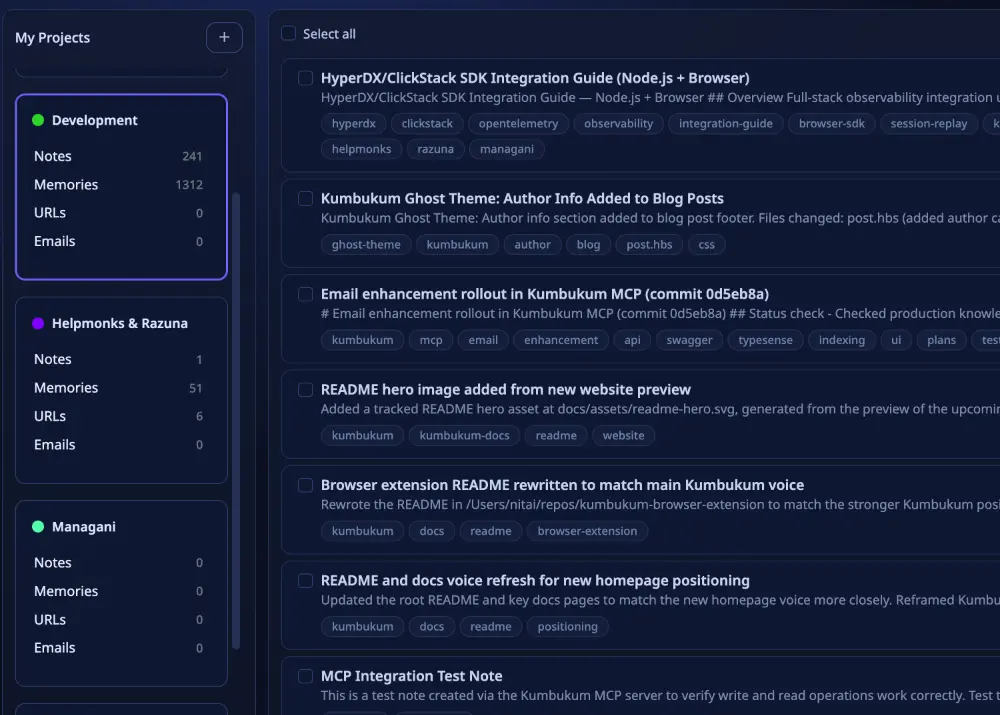

New in Kumbukum & browser extension

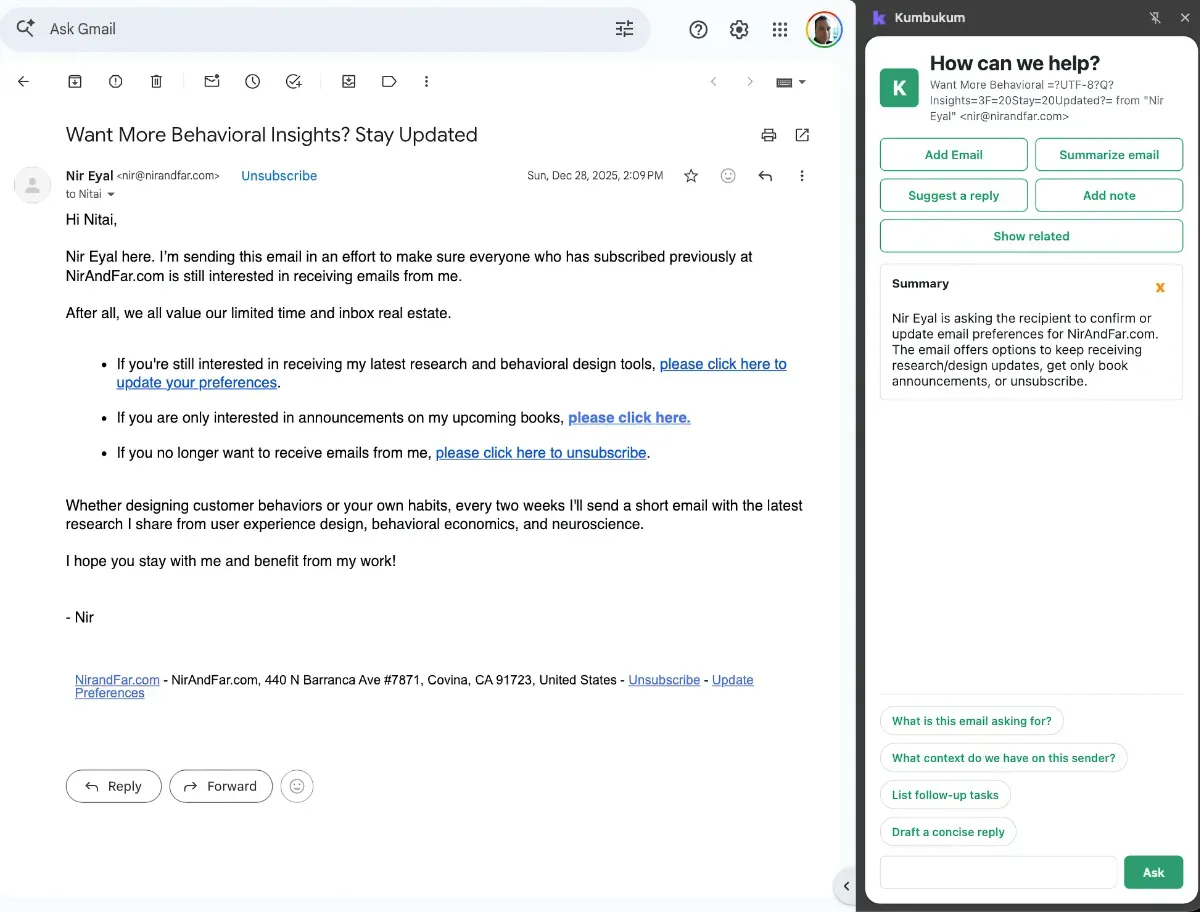

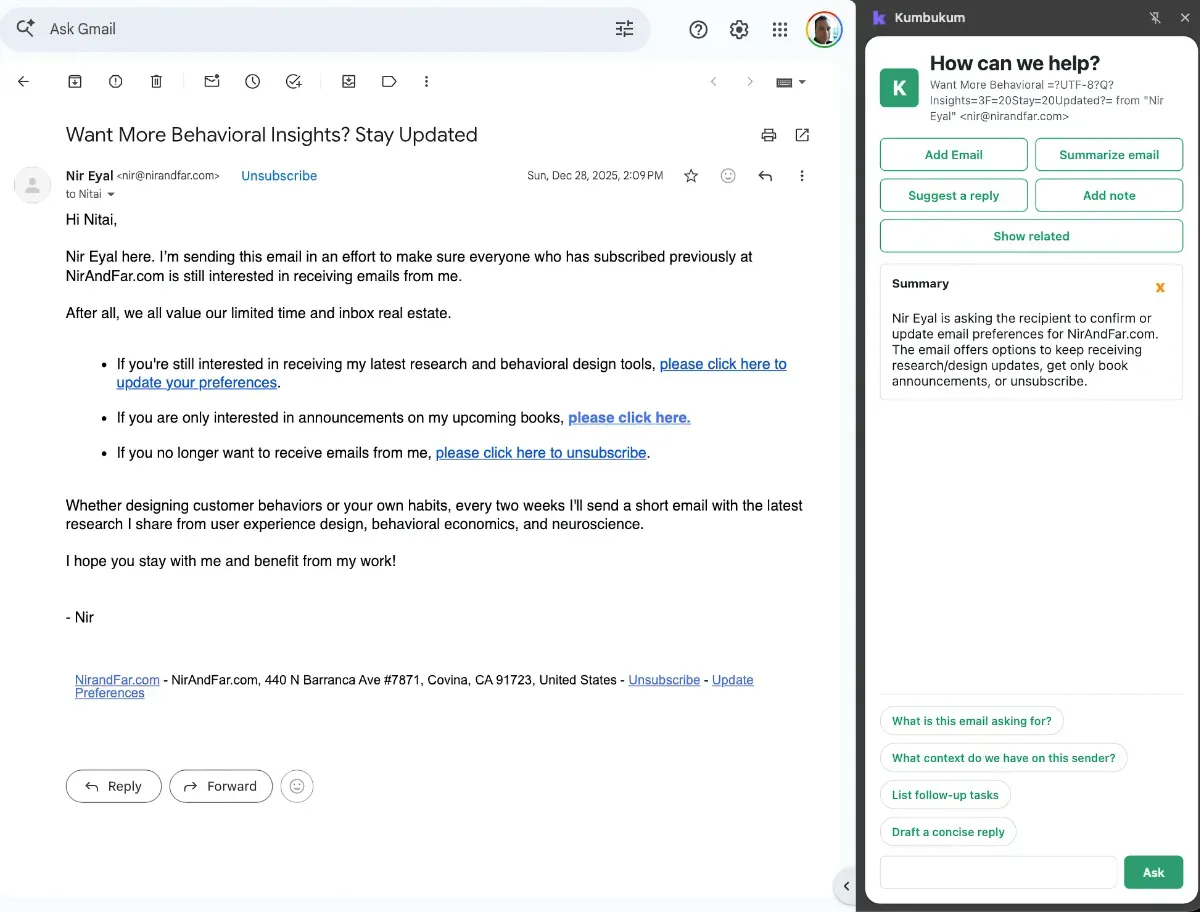

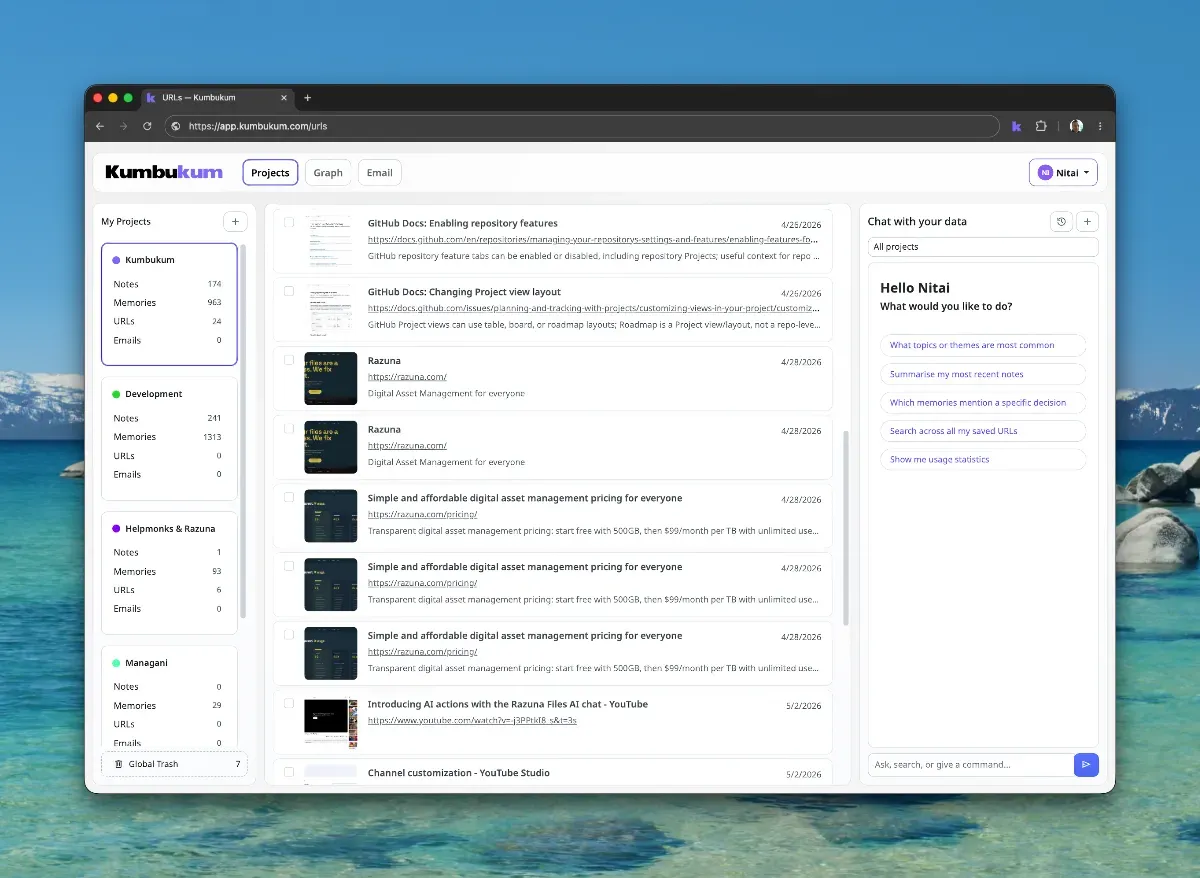

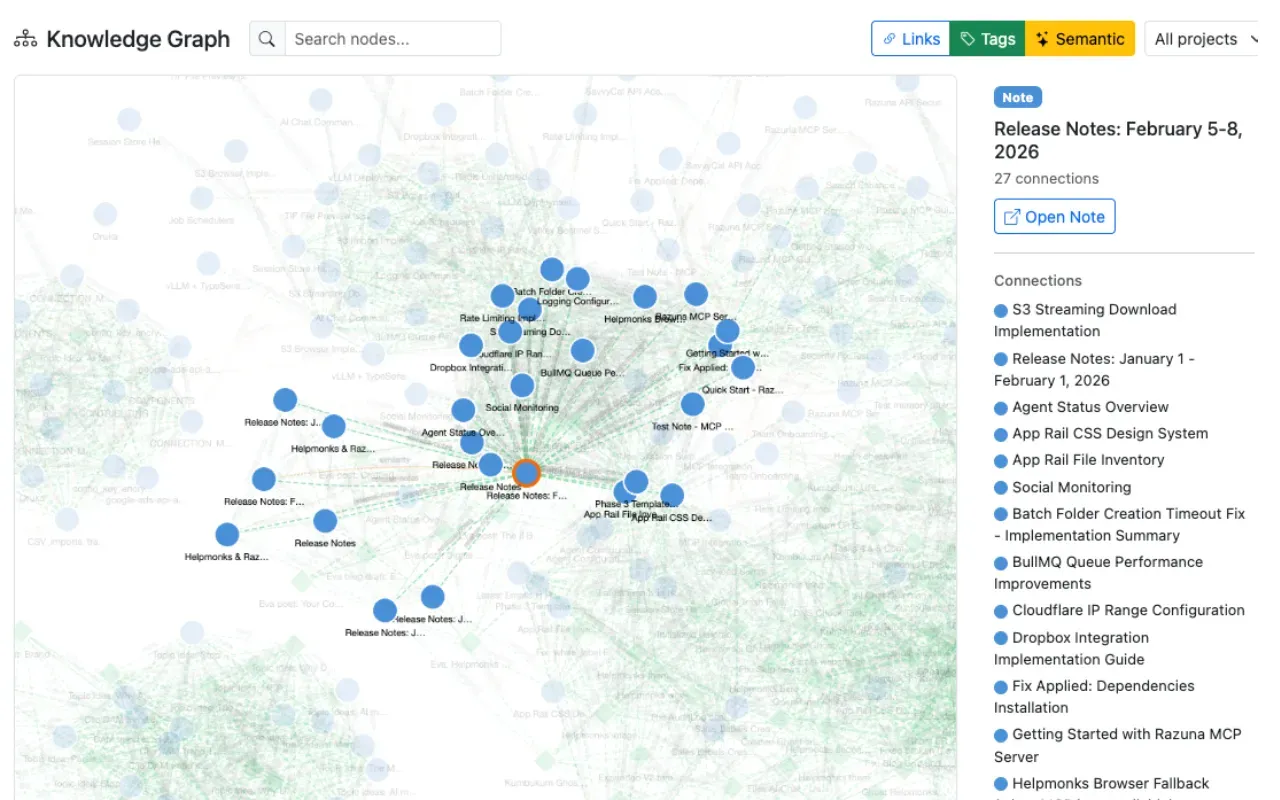

The Kumbukum Browser Extension v2 is here, with automatic website capture and email side-panel integration. Alongside the extension, Kumbukum itself has seen a wave of improvements.

Kumbukum is an open-source shared memory layer for teams that need AI context across assistants, code review, support, email, and research. Start with the comparison hub, the MCP memory alternatives guide, or the use-case pages for support teams, code review, and AI email triage.


When critical tools like Notion go offline, business operations grind to a halt because team knowledge is trapped in a single proprietary silo. Modern teams cannot afford to have their…

Traditional note-taking tools and wikis promise organization but often deliver overwhelming complexity and steep learning curves. When capturing ideas feels like a chore, valuable knowledge gets lost in the noise.

Power users waste hours every week re-explaining context to AI tools. The fresh-session handoff is a tax you should not have to pay.

The last few weeks brought a wave of plumbing work to Kumbukum — the kind of changes you don't see in screenshots but feel every time you search, sync, or open the MCP. Here's what landed.

You have Claude, ChatGPT, and Cursor open. None of them share memory. Here is what that fragmentation costs you, and how to fix it with an open-source shared memory layer.

Stuffing your AI's context window with everything you can find does not make it smarter. It makes it worse. Here is why context rot is real, and how curated memory fixes it.

Your AI sees the code, but not the story. Learn how capturing your repo's PR history as institutional memory gives AI-powered code review the context behind past decisions, so old decisions stop getting re-litigated.

Prompt engineering got you here. Context engineering is what takes AI from generic to genuinely useful.

Native AI memory saves your preferences. It ignores your projects. Here is why that gap matters and what a real persistent memory layer looks like.

If your AI workflow depends on hand-built .cognition folders, prompt rituals, and recap files, the problem is not your discipline. The problem is the missing memory layer.

Every time you start a new Claude Code or Cursor session, you re-explain the same architecture decisions. There's a fix — and it's not a better config file.