Why Most MCP Memory Servers Make Your AI Worse

Every builder eventually hits the same wall: they add an MCP memory server, their AI slows down, responses feel bloated, and something that should feel smarter feels worse. The culprit is not persistence itself. It is how most MCP memory servers load context.

Here is the math that most people skip.

The MCP Token Overhead Problem

Every MCP tool call carries overhead. The tool definition, the function schema, and the response envelope can each add 500 to 2,000 tokens to your context window before a single byte of actual memory reaches your AI. A naive MCP memory server that retrieves everything on every request does not add memory. It eats your context budget.

A thread on r/ClaudeAI put it bluntly: "PSA: MCP tool calls are eating your context window alive. Here is the math." Developers ran the numbers and found that a memory server with 20 stored items, fetching all on every message, consumed nearly 8,000 tokens per request. Because that cost recurs on every message and grows with the size of the store, the budget is gone before the conversation starts.
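To make that math concrete, here is a minimal sketch of how a fetch-everything server scales. It assumes the cost is linear in the number of stored items and derives a per-item figure of roughly 400 tokens from the thread's numbers (about 8,000 tokens for 20 items). The function name and the `fixed_overhead` parameter are illustrative, not part of any real server.

```python
def memory_overhead_tokens(stored_items: int, tokens_per_item: int, fixed_overhead: int = 0) -> int:
    """Tokens a fetch-everything memory server adds to every request.

    Assumes each stored item costs the same number of tokens and that
    every item is returned on every call.
    """
    return fixed_overhead + stored_items * tokens_per_item


# Derived from the thread's figures: ~8,000 tokens for 20 items.
TOKENS_PER_ITEM = 8000 // 20

for item_count in (20, 100, 500):
    print(item_count, memory_overhead_tokens(item_count, TOKENS_PER_ITEM))
```

Under these assumptions the overhead grows in lockstep with the store, which is why a setup that feels fine at 20 items stops being viable long before 500.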

This is not theoretical. Users building on top of popular open-source memory servers reported degraded response quality, slower inference, and higher API bills -- the exact opposite of what persistent memory is supposed to deliver.

Why Naive Memory Servers Fail

Most MCP memory servers are built around a simple retrieval model: store everything, fetch everything, stuff it all into the prompt. It works in demos with five memories. It breaks in production at 500.

The core problem is undifferentiated retrieval. When memory servers dump the full store into context, the AI has to process irrelevant information alongside relevant information. This degrades answer quality in ways that are hard to debug. The model technically "has" the memory. It just cannot prioritize it.

There are three failure modes builders run into:

- Bulk injection. The server fetches all stored memories on every tool call and injects them into the system prompt. Fast to build. Catastrophic at scale.

- Static snapshots. Memory is written once and never updated. The AI works from stale context and compounds errors over time.

- No scoping. All memories from all projects get mixed together. Working on a new feature? Your AI might surface decisions from a project you finished six months ago.

These are not edge cases. They are the default behavior of most DIY memory implementations.

What Relevant-Only Retrieval Actually Means

The fix is not a bigger context window. It is a smarter retrieval layer.

Instead of fetching everything, a properly designed memory server scores memories against the current conversation, retrieves only what is semantically relevant to the active query, and respects project and session scope so unrelated context never enters the window. The result is a leaner prompt, a sharper AI response, and lower token spend -- all at the same time.

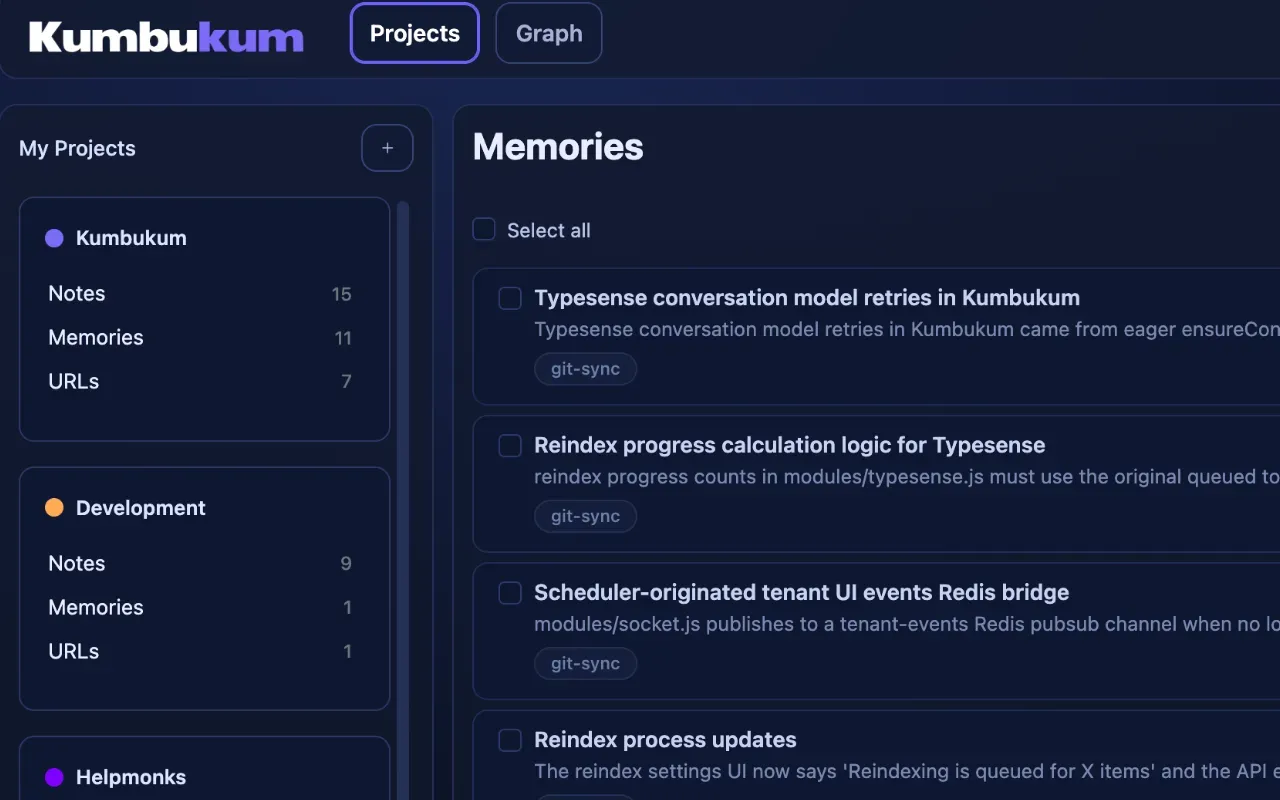
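The retrieval flow described above can be sketched in a few lines. This is an illustration under stated assumptions, not the code of any particular memory server: the `Memory` class, the `retrieve` function, and the `min_score` threshold are hypothetical, and a bag-of-words cosine similarity stands in for the embedding model a real server would more likely use.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Memory:
    project: str
    text: str


def _vector(text: str) -> Counter:
    # Bag-of-words term counts; a stand-in for a real embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, memories, project, top_k=3, min_score=0.1):
    """Return up to top_k in-scope memories, ranked by similarity to the query."""
    query_vec = _vector(query)
    scored = [
        (_cosine(query_vec, _vector(m.text)), m)
        for m in memories
        if m.project == project  # scope filter: other projects are never scored
    ]
    relevant = [(score, m) for score, m in scored if score >= min_score]
    return sorted(relevant, key=lambda pair: pair[0], reverse=True)[:top_k]


store = [
    Memory("billing", "We chose Stripe webhooks for invoice payment events"),
    Memory("billing", "Team standup is at 10am on Mondays"),
    Memory("legacy-app", "Invoice payment events used a polling job"),
]

hits = retrieve("how do we handle invoice payment events", store, project="billing")
for score, memory in hits:
    print(f"{score:.2f}  {memory.text}")
```

Only the Stripe memory comes back here: the standup note is in scope but scores zero against the query, and the legacy project's memory is excluded before scoring even though its wording overlaps.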

This is the design principle behind Kumbukum's persistent memory layer. Rather than dumping a flat store into the prompt, Kumbukum retrieves only the memories that match the current context. Your AI gets what it needs. Nothing more.

The principle holds regardless of which tool you are using. Claude Code, Cursor, ChatGPT, Windsurf -- if your MCP memory server is not doing relevance-ranked retrieval, you are paying a tax on every single message.

The Open-Source Benchmark Problem

The open-source AI memory space is moving fast. A wave of new MCP-native memory servers launched in early 2026 -- M3, Mnemos, Engram, Picobrain, MemPalace. Many of them benchmark well on toy datasets. Most of them have not been stress-tested against real-world context budgets.

The community is learning this the hard way. r/LocalLLaMA and r/ClaudeAI are full of builders who integrated a memory server, saw benchmark numbers that looked promising, and then watched real performance degrade in production. The gap between "memory stored" and "memory useful" is wider than most benchmarks admit.

If you are evaluating a memory server, ask these questions before you integrate:

- Does it do relevance-ranked retrieval, or does it return everything?

- What is the actual token cost per call at 100, 500, and 1000 stored memories?

- Does it support project-level scoping to prevent context bleed?

- Is the retrieval logic open and auditable?

That last question matters more than it used to. The shift toward open, transparent, builder-first tooling is not just a philosophical preference. It is a practical requirement when you are debugging why your AI suddenly started ignoring half of its memory.
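The token-cost question in particular is easy to answer empirically. The sketch below is one way to do it, assuming you can wrap the server's retrieval call in a plain function. The names `tokens_per_call`, `fetch_all`, and `fetch_top3` are hypothetical, the store contents are synthetic, and the four-characters-per-token estimate is a rough heuristic that should be replaced with your model's tokenizer.

```python
from typing import Callable, Dict, List, Sequence


def estimate_tokens(text: str) -> int:
    # Rough heuristic (about 4 characters per token); not a real tokenizer.
    return max(1, len(text) // 4)


def tokens_per_call(
    retrieve: Callable[[str, List[str]], List[str]],
    query: str,
    sizes: Sequence[int] = (100, 500, 1000),
) -> Dict[int, int]:
    """Estimated tokens returned by `retrieve` for one query at each store size."""
    results = {}
    for size in sizes:
        store = [f"Memory {i}: decision notes for feature {i}" for i in range(size)]
        results[size] = sum(estimate_tokens(m) for m in retrieve(query, store))
    return results


def fetch_all(query: str, store: List[str]) -> List[str]:
    return store  # naive server: ignores the query entirely


def fetch_top3(query: str, store: List[str]) -> List[str]:
    # Toy relevance ranking by word overlap, capped at three results.
    words = set(query.lower().split())
    ranked = sorted(store, key=lambda m: len(words & set(m.lower().split())), reverse=True)
    return ranked[:3]


naive = tokens_per_call(fetch_all, "notes for feature 7")
bounded = tokens_per_call(fetch_top3, "notes for feature 7")
print("fetch_all :", naive)
print("fetch_top3:", bounded)
```

A server that passes this check shows a flat line across store sizes. One that fails shows cost rising with every memory you add.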

What Good MCP Memory Architecture Looks Like

A memory server that actually improves AI performance has a few non-negotiable properties:

- Semantic retrieval: Match memories to the current query, not to a timestamp. The three most relevant memories from six months ago are more useful than the twenty most recent ones from this week.

- Bounded injection: Set a maximum token budget for memory injection and respect it. Never let the memory layer crowd out the actual conversation.

- Project scoping: Memories should be scoped to the project, workspace, or session they belong to. Cross-contamination is a silent failure mode.

- Transparency: You should be able to see exactly what memories were retrieved and why. A black-box memory layer is not a memory layer. It is a liability.

These requirements are why Kumbukum was built with an open, inspectable architecture. When you are debugging a production AI workflow, you cannot afford to guess at what the memory layer is doing. You need to see it.
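Bounded injection and transparency fit naturally together. The following is a minimal sketch under stated assumptions, not Kumbukum's implementation: it takes candidates that already carry a relevance score, greedily keeps the best ones that fit a token budget, and returns a trace of every decision. `Scored`, `select_within_budget`, and the character-based token estimate are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Scored:
    text: str
    score: float


def estimate_tokens(text: str) -> int:
    # Rough heuristic; replace with your model's tokenizer.
    return max(1, len(text) // 4)


def select_within_budget(candidates, max_tokens):
    """Greedily keep the highest-scoring memories that fit the token budget.

    Returns (selected_texts, trace). The trace lists every candidate with
    its score, estimated cost, and whether it was injected.
    """
    selected, trace, used = [], [], 0
    for c in sorted(candidates, key=lambda c: c.score, reverse=True):
        cost = estimate_tokens(c.text)
        fits = used + cost <= max_tokens
        if fits:
            selected.append(c.text)
            used += cost
        trace.append({"text": c.text, "score": c.score, "tokens": cost, "included": fits})
    return selected, trace


candidates = [
    Scored("Use Postgres for the orders table", 0.82),
    Scored("Deploys go out on Thursdays", 0.35),
    Scored("API keys rotate every 90 days via the ops runbook", 0.61),
]

selected, trace = select_within_budget(candidates, max_tokens=22)
for entry in trace:
    print(entry)
```

Logging the trace alongside each model call gives you something to read when the AI appears to ignore a memory: either it was never retrieved, or it was retrieved and dropped for budget reasons.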

The Real Cost of Getting This Wrong

Here is the number that changes the conversation: developers who profiled their MCP memory usage found that naive retrieval was adding 30 to 45 percent to their per-session token cost. At $15 per million tokens, which is Claude Sonnet's output rate and so an upper bound for injected memory that is billed as input, a team running 500 sessions a day is spending an extra $200 to $300 per day on tokens that are making their AI worse.
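Those dollar figures can be turned back into tokens as a sanity check. The sketch below takes the $15 per million token rate, the 500 sessions a day, and the $200 to $300 range from the paragraph above at face value. The function name is illustrative, and a single flat rate is an assumption, since real bills split input and output pricing.

```python
def implied_extra_tokens_per_session(
    extra_cost_per_day: float,
    sessions_per_day: int,
    price_per_million_tokens: float,
) -> float:
    """Back out how many wasted tokens per session a daily overspend implies."""
    tokens_per_day = extra_cost_per_day / price_per_million_tokens * 1_000_000
    return tokens_per_day / sessions_per_day


for daily_cost in (200, 300):
    tokens = implied_extra_tokens_per_session(daily_cost, 500, 15.0)
    print(f"${daily_cost}/day -> {round(tokens):,} extra tokens per session")
```

Under those assumptions the overspend works out to roughly 27,000 to 40,000 extra tokens per session. Run the same calculation with your own session counts and rates to see whether the problem is worth fixing for your workload.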

That cost is invisible until you measure it. Most teams do not measure it. They just accept that their AI feels a bit slow and a bit unfocused, and they attribute it to model limitations rather than retrieval architecture.

Fixing the retrieval layer pays for itself immediately. Fewer tokens injected means lower cost, faster responses, and better output -- because the model is not wading through noise to find signal.

You can read more about how Kumbukum approaches MCP-native persistent memory and what a scoped, relevance-ranked retrieval layer actually looks like in practice.

Build Smarter, Not Bigger

The AI memory space is maturing fast. The first generation of MCP memory servers proved the concept. The second generation is proving that architecture matters as much as the concept.

If your memory server is making your AI worse, the answer is not to remove memory. It is to demand better retrieval. Relevant-only, scoped, transparent memory is not a premium feature. It is table stakes for any production AI workflow.

Kumbukum is built for builders who want persistent AI memory that works the way memory should: quietly, accurately, and without bloating every prompt with everything you have ever done. Try it for free and see what your context window looks like when it works with you instead of against you.

Builder-first also means inspectable: Kumbukum is open source. You can read the code, self-host it, or contribute to the GitHub repository.